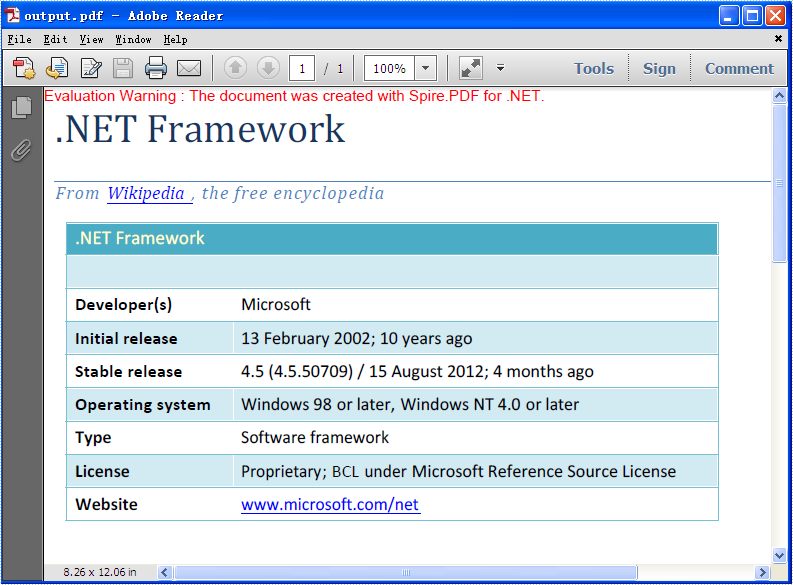

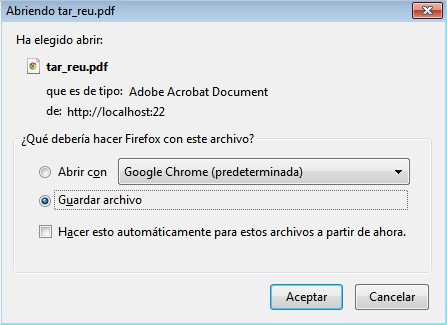

Open/Save dialog box in the WebBrowser control (Visual C#). I'm using a WebBrowser control and I need that when a user clicks on a link that could.

May 27, 2012: I am developing a Windows application where I need to convert the WebBrowser content to a PDF file. For example: WebBrowser1.Navigate("www.google.com"); I can see the Google home page in the WebBrowser control. Now I need to export that Google home page, as shown in the WebBrowser control, to a PDF file. Can anyone help me?

It seems that no one has actually written the code to save the contents of a page, including images, as rendered by the .NET WebBrowser control. And the ShowSaveAsDialog method provided by the WebBrowser control sucks: it doesn't return the filename, and it doesn't work. Try saving a Google page with a search filled in and you get just the Google home page; it doesn't save with the parameters specified.

Now, of course there's this helpful approach (from StackOverflow):

So my approach revised would be: Use System.Net.HttpWebRequest to get the main HTML document as a string or stream (easy). Load this into an HtmlAgilityPack document, where you can now easily query the document to get lists of all image elements, stylesheet links, etc. Then make a separate web request for each of these files and save them to a subdirectory. Finally, update all relevant links in the main page to point to the items in the subdirectory.

But what amazes me is that no one has posted code that does this, at least that I can find.
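The quoted approach could be sketched roughly as follows. This is only an illustration, not tested against real sites: it assumes the HtmlAgilityPack NuGet package is referenced, it uses HttpClient in place of the HttpWebRequest mentioned above, and the names PageSaver, RewriteLinks, SaveAsync and the "_files" folder suffix are made up for the example. It fetches the page again over HTTP, so anything the WebBrowser control built with JavaScript, or any state held in cookies, is not captured.

```csharp
// Sketch only: assumes the HtmlAgilityPack NuGet package is referenced.
using System;
using System.Collections.Generic;
using System.IO;
using System.Net.Http;
using System.Threading.Tasks;
using HtmlAgilityPack;

public static class PageSaver   // hypothetical helper, not part of any library
{
    // Element/attribute pairs whose targets are needed to render the page.
    private static readonly (string XPath, string Attr)[] Targets =
    {
        ("//img[@src]", "src"),
        ("//script[@src]", "src"),
        ("//link[@rel='stylesheet'][@href]", "href"),
    };

    // Points every resource link at resourceDir and returns a map of
    // absolute resource URI -> local file name. Does no network access.
    public static Dictionary<Uri, string> RewriteLinks(
        HtmlDocument doc, Uri pageUri, string resourceDir)
    {
        var map = new Dictionary<Uri, string>();
        foreach (var (xpath, attr) in Targets)
        {
            // SelectNodes returns null (not an empty list) when nothing matches.
            var nodes = doc.DocumentNode.SelectNodes(xpath);
            if (nodes == null) continue;

            foreach (var node in nodes)
            {
                string raw = node.GetAttributeValue(attr, "");
                if (!Uri.TryCreate(pageUri, raw, out Uri abs)) continue;
                // Skip data:, javascript: and other non-HTTP targets.
                if (abs.Scheme != Uri.UriSchemeHttp && abs.Scheme != Uri.UriSchemeHttps) continue;

                if (!map.TryGetValue(abs, out string name))
                {
                    // Numbered names avoid collisions between same-named files.
                    name = "res" + map.Count + Path.GetExtension(abs.AbsolutePath);
                    map[abs] = name;
                }
                node.SetAttributeValue(attr, resourceDir + "/" + name);
            }
        }
        return map;
    }

    // Downloads the page and its resources, then saves the rewritten HTML.
    public static async Task SaveAsync(Uri pageUri, string outputHtmlPath)
    {
        string folder = Path.GetDirectoryName(Path.GetFullPath(outputHtmlPath));
        string resourceDir = Path.GetFileNameWithoutExtension(outputHtmlPath) + "_files";
        Directory.CreateDirectory(Path.Combine(folder, resourceDir));

        using (var http = new HttpClient())
        {
            var doc = new HtmlDocument();
            doc.LoadHtml(await http.GetStringAsync(pageUri));

            foreach (var pair in RewriteLinks(doc, pageUri, resourceDir))
            {
                try
                {
                    byte[] data = await http.GetByteArrayAsync(pair.Key);
                    File.WriteAllBytes(Path.Combine(folder, resourceDir, pair.Value), data);
                }
                catch (HttpRequestException)
                {
                    // A failed download leaves the rewritten link pointing at a missing file.
                }
            }
            doc.Save(outputHtmlPath);
        }
    }
}
```

A call such as `await PageSaver.SaveAsync(new Uri("http://www.google.com/search?q=test"), @"C:\temp\page.html");` would then write page.html plus a page_files folder next to it. Because the full URL is requested, the query string is kept. Images referenced from inside stylesheets are not followed in this sketch.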

Why is working with WebBrowser such a PITA? Anyway, if someone has some code for saving a web page without using ShowSaveAsDialog, please point me in the right direction.

OK, very good. I actually suggested a way to parse it. Apparently, this is unavoidable.

Actually, it depends on how deep you need to scrape, as following links can eventually take you anywhere on the whole Web. If you want to scrape only the URIs used to render just one page, that will only include the URIs loaded as pictures, JavaScript files and the contents of frames. Some downloads could give random or unpredictable results, and some won't be reached at all (for example, media streams). If you need just this, the approach could be to inspect the HTML and collect all the URIs it requests.
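As a rough illustration of collecting those URIs, the sketch below walks the document already loaded in a Windows Forms WebBrowser control and gathers the image, script and frame addresses. The class name RenderedUris and its methods are invented for this example. It assumes the page has finished loading, and that frames from other domains may refuse access, which is handled here by catching UnauthorizedAccessException.

```csharp
using System;
using System.Collections.Generic;
using System.Windows.Forms;

public static class RenderedUris   // hypothetical helper name
{
    // Collects the URIs of pictures, scripts and frames in a loaded document.
    public static List<string> Collect(HtmlDocument doc)
    {
        var raw = new List<string>();
        AddAttribute(raw, doc.Images, "src");
        AddAttribute(raw, doc.GetElementsByTagName("script"), "src");

        HtmlWindowCollection frames = doc.Window != null ? doc.Window.Frames : null;
        if (frames != null)
        {
            foreach (HtmlWindow frame in frames)
            {
                try
                {
                    raw.Add(frame.Url.ToString());
                    raw.AddRange(Collect(frame.Document));   // recurse into the frame
                }
                catch (UnauthorizedAccessException)
                {
                    // Assumption: a cross-domain frame denied access; skip it.
                }
            }
        }
        return Clean(raw);
    }

    private static void AddAttribute(List<string> target, HtmlElementCollection elements, string attr)
    {
        foreach (HtmlElement element in elements)
            target.Add(element.GetAttribute(attr));
    }

    // Drops empty entries (e.g. inline scripts with no src) and duplicates,
    // keeping the first occurrence of each URI in order.
    public static List<string> Clean(IEnumerable<string> raw)
    {
        var seen = new HashSet<string>();
        var result = new List<string>();
        foreach (string uri in raw)
        {
            if (!string.IsNullOrWhiteSpace(uri) && seen.Add(uri))
                result.Add(uri);
        }
        return result;
    }
}
```

This would typically be called from a DocumentCompleted handler, for example `var uris = RenderedUris.Collect(webBrowser1.Document);`. As noted above, resources requested by scripts at run time or streamed media will not show up in such a list.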